
The Overstim Sim

TouchDesigner Lead

Duration: 6 Months

Team Members

Original Concept

Lumira: A LAES Mixed-Media Show

Background Information

Our task was to create a self-contained, 3-to-4-minute interactive and immersive show that demonstrates the technological capabilities of the Expressive Technologies Studio in the newly built William & Linda Frost Center, located at the heart of Cal Poly's campus.

The original theme was sustainability and the 5 elements.

Lumira Logo

William & Linda Frost Center

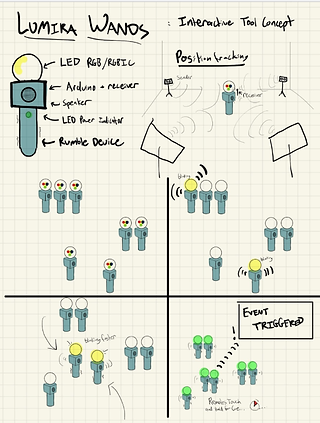

Original Method for Interactivity Integration

Motion Tracking Wand

Computer Vision

3D Print

Testing

Reworking Lumira into The Overstim Sim

The Overstim Sim guides the user through an abstract representation of what it is like to experience sensory overload resulting from a variety of triggers. The goal of the project is to use advanced interactive technologies to create a mixed-media, mixed-reality experience that illuminates overstimulation and how it can potentially be avoided by identifying your own triggers and by being more conscientious and understanding of what may be triggers for others.

New Method for Interactivity Integration

Derivative's TouchDesigner & Google's MediaPipe Module

NDI Tools

Screen Capture & Webcam

I used NDI Tools to get the live video feed into and out of TouchDesigner. NDI Tools on Windows has two particularly useful applications. NDI Screen Capture allows me to present a computer display or a webcam as an NDI output. In TouchDesigner, I use the 'NDI In' node and set its source to my laptop's integrated camera, though it could be the name of any webcam that I have hooked up to my laptop. NDI Webcam, on the other hand, allows me to designate an available NDI source (up to 4) as the video input for another application, such as Zoom. An NDI source can be created in TouchDesigner by using the "NDI Out" node and giving it a source name such as "FinalOut". Then in NDI Webcam, I can set video3 to be "FinalOut".

The Final Product

Prologue

The first part of the Overstim Sim experience is a 50-second scripted prologue in which the user is given a general description of what overstimulation and sensory overload are and how they have affected certain students at Cal Poly, as well as potentially the user themself.

Home Screen

In the home area, the user is surrounded by five colored squares, each with a simple vector design. From here, the user can enter each of the five filters, with each filter relating to one of the five senses (taste, hearing, sight, touch, and smell). The program can only track one hand at a time. Whichever hand is currently being tracked is identified by a red dot below the user’s middle finger. With the tracked hand, the user can select a filter to enter by hovering over one of the squares and making a closed fist for 2 seconds. When the user’s hand is hovering over a square, hand landmarks will be drawn. There is also text in the bottom left corner of the screen that indicates whether the user’s hand is open or closed. After the user enters a filter and returns to the home screen, the square relating to that filter will no longer appear on screen.

Yellow Area

This is the 'NDI In' node, which gets the unfiltered live video feed from a webcam, and a script node, which handles restarting the program and enabling/disabling cooking to conserve GPU resources, maintain a solid frame rate, and limit lag as much as possible.

Purple Area

This is a series of 'NDI In' nodes for each of the filters, 'moviefilein' nodes for the prologue and epilogue videos, and the home area, all feeding into a switch node whose index value determines which of the inputs to display as the output video feed.
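As a rough illustration of how a single index can drive the whole show, here is a minimal Python sketch of the state logic described on this page. It is not the project's actual script: the input order in `INPUTS` and the names `ShowState`, `enter_filter`, and `filter_finished` are hypothetical.

```python
# Hypothetical input order for the switch node; the real order may differ.
INPUTS = ["prologue", "home", "taste", "hearing", "sight", "touch", "smell", "epilogue"]
FILTERS = ["taste", "hearing", "sight", "touch", "smell"]


class ShowState:
    """Tracks which input the switch node should currently display."""

    def __init__(self):
        self.current = "prologue"
        self.visited = set()

    def switch_index(self):
        # Index of the input that should be shown as the output feed.
        return INPUTS.index(self.current)

    def prologue_finished(self):
        if self.current == "prologue":
            self.current = "home"

    def enter_filter(self, name):
        # Only unvisited filters can be entered, and only from the home screen.
        if self.current == "home" and name in FILTERS and name not in self.visited:
            self.current = name

    def filter_finished(self):
        if self.current not in FILTERS:
            return
        self.visited.add(self.current)
        # After all five filters, play the epilogue instead of returning home.
        self.current = "epilogue" if len(self.visited) == len(FILTERS) else "home"

    def available_squares(self):
        # Squares for visited filters no longer appear on the home screen.
        return [f for f in FILTERS if f not in self.visited]
```

Inside TouchDesigner, the value returned by `switch_index()` would be written to the switch node's index parameter.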

Red Area

This is a complex series of select and logic CHOPs which take the information provided by MediaPipe (the x, y coordinates of the user's middle finger) and determine whether or not their hand is currently over one of the squares. If it is, a circle will spawn and decrease in size until 3 seconds have passed, and then the selected filter will be entered.
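The project does this with CHOPs, but the same check can be sketched in plain Python. The square positions in `SQUARES` and the starting radius are made-up values, and coordinates are assumed to be normalized to the 0 to 1 range.

```python
# Hypothetical layout: name -> (x, y, width, height) in normalized screen space.
SQUARES = {
    "taste": (0.05, 0.40, 0.15, 0.20),
    "hearing": (0.20, 0.05, 0.15, 0.20),
    "sight": (0.425, 0.02, 0.15, 0.20),
    "touch": (0.65, 0.05, 0.15, 0.20),
    "smell": (0.80, 0.40, 0.15, 0.20),
}


def square_under(point, squares):
    """Return the name of the square containing the point, or None."""
    px, py = point
    for name, (x, y, w, h) in squares.items():
        if x <= px <= x + w and y <= py <= y + h:
            return name
    return None


def countdown_radius(elapsed, duration=3.0, start_radius=0.1):
    """Radius of the shrinking circle after `elapsed` seconds of hovering."""
    remaining = max(0.0, 1.0 - elapsed / duration)
    return start_radius * remaining
```

When `countdown_radius` reaches zero, the filter returned by `square_under` is the one to enter.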

Brown Area

This is the second script, which uses MediaPipe to handle hand tracking. Its code determines whether the user has their hand over a square, whether their hand is open or in a fist, how long their hand has been closed, and which filter to switch to depending on which filter has been selected.
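One common way to tell an open hand from a fist with MediaPipe's 21-point hand model is to check whether each fingertip is closer to the wrist than that finger's middle (PIP) joint. The sketch below uses that heuristic, which is an assumption on my part and not necessarily what the project script does. `is_fist` and `HoldTimer` are hypothetical names, and landmarks are assumed to be a list of 21 (x, y) tuples.

```python
import math

WRIST = 0
# (tip, pip) landmark indices for the index, middle, ring, and pinky fingers
# in MediaPipe's hand model.
FINGERS = [(8, 6), (12, 10), (16, 14), (20, 18)]


def is_fist(landmarks):
    """Treat the hand as a fist when at least three fingers are curled."""
    wx, wy = landmarks[WRIST]
    curled = 0
    for tip, pip in FINGERS:
        tip_d = math.hypot(landmarks[tip][0] - wx, landmarks[tip][1] - wy)
        pip_d = math.hypot(landmarks[pip][0] - wx, landmarks[pip][1] - wy)
        if tip_d < pip_d:  # fingertip folded back toward the wrist
            curled += 1
    return curled >= 3


class HoldTimer:
    """Reports True once a condition has held continuously for hold_seconds."""

    def __init__(self, hold_seconds=2.0):
        self.hold_seconds = hold_seconds
        self.started_at = None

    def update(self, active, now):
        if not active:
            self.started_at = None  # releasing the fist resets the timer
            return False
        if self.started_at is None:
            self.started_at = now
        return now - self.started_at >= self.hold_seconds
```

Each frame, the script would call `update(is_fist(landmarks) and hovering, now)` and enter the selected filter when it returns True.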

Blue Area

This is the input for the icons and squares that can be selected to enter each filter.

White Area

This is the input and output for the prologue and epilogue audio.

Green Area

These are the container nodes for each of the five filters.

Taste Filter

The taste filter tasks the viewer with closing their mouth around the objects on the screen. Each food object corresponds with visuals that represent overstimulation, such as a brain freeze. Using TouchDesigner, we were able to track the state of the viewer's mouth and react to them closing their mouth around each food icon.

Red Area

This is a complex series of select and logic CHOPs which determine whether the inside of the user’s mouth is over one of the objects and whether each of the three objects is within the lower portion of the user’s face.

Blue Area

This is the script node, which contains around 300 lines of code. It uses MediaPipe to create a face mesh of the user to highlight their lips. The code tracks the location of the user's mouth and determines whether their mouth is open or closed. When the user hovers their open mouth over one of the three food icons on screen and then closes their mouth on top of it, the corresponding effect is triggered. The code also keeps track of the filter’s end condition and sends the user back to the home screen when that end condition is met.
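The open-then-close "bite" logic can be sketched roughly as follows. This is only an illustration under stated assumptions: the open/closed test compares lip gap to mouth width, the 0.3 threshold is a guess, and `mouth_open_ratio` and `BiteDetector` are hypothetical names. The four points would come from the face mesh (inner-lip and mouth-corner landmarks).

```python
import math


def mouth_open_ratio(upper_lip, lower_lip, left_corner, right_corner):
    """Lip gap divided by mouth width, so the value is independent of distance."""
    width = math.dist(left_corner, right_corner)
    if width == 0:
        return 0.0
    return math.dist(upper_lip, lower_lip) / width


class BiteDetector:
    """Fires when an open mouth over a food icon closes on that same icon."""

    def __init__(self, open_threshold=0.3):  # threshold is an assumed value
        self.open_threshold = open_threshold
        self.armed_on = None  # icon the open mouth was last hovering over

    def update(self, ratio, food_under_mouth):
        if ratio >= self.open_threshold:
            # Mouth is open: remember which icon (if any) it is over.
            self.armed_on = food_under_mouth
            return None
        # Mouth is closed: it counts as a bite only on the armed icon.
        eaten = None
        if self.armed_on is not None and self.armed_on == food_under_mouth:
            eaten = self.armed_on
        self.armed_on = None
        return eaten
```

The returned icon name would then select which effect to trigger.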

Yellow Area

These are the four different backgrounds used in this filter.

Green Area

These are the three objects the user can eat.

White Area

This is a container named 'Spicy'. The image on the far right shows what it contains. The nodes within this container handle the audiovisual effects that occur when an object is eaten.

Brown Area

This is the input for the unfiltered live video feed and a series of TOPs that use my laptop’s NVIDIA graphics card to isolate the user from the background.

Hearing Filter

The hearing filter tasks the viewer with following along with different body poses that correspond with different notes. When the viewer completes the sequence, a song begins to play. This gives the viewer a challenge, similar to how following along with an auditory experience such as a conversation can be challenging when in sensory overload.

Green Area

This is the input and output for the song audio.

Red Area

This is the input and output for the piano notes audio.

Blue Area

This is the input for the white figures that will appear on screen after two correct notes have been played. They are all put into a switch node, which has an index value that determines which of the figures to display on screen.

Yellow Area

This is the input for the visual key at the bottom of the screen that displays the note sequences the user needs to play and which poses correlate to each note.

White Area

This is the script node. It contains just under 900 lines of code and uses MediaPipe for pose detection. It stores the sequence of notes played and which poses correlate to which musical notes. When the user makes one of the 8 poses other than 'null', the piano audio for that note is triggered and the note is added to the sequence. The code can also track whether the user has jumped. When the user jumps, the previously played note is copied and added to the sequence. If the user plays an incorrect note or completes one of the three note puzzles, the sequence is erased so that the user may start over.
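The sequence handling described above can be sketched in a few lines of Python. This is a simplified stand-in for the real script: the class name `NoteSequence`, the return strings, and the puzzle contents used in the example are all hypothetical, and pose detection is left out entirely.

```python
class NoteSequence:
    """Checks played notes against a list of target puzzles, one at a time."""

    def __init__(self, puzzles):
        self.puzzles = puzzles
        self.puzzle_index = 0
        self.played = []

    def play(self, note):
        """Add a note; returns 'ok', 'wrong', 'solved', or 'done'."""
        if self.puzzle_index >= len(self.puzzles):
            return "done"  # every puzzle has already been completed
        target = self.puzzles[self.puzzle_index]
        self.played.append(note)
        if self.played != target[:len(self.played)]:
            self.played = []  # incorrect note: erase and start over
            return "wrong"
        if len(self.played) == len(target):
            self.played = []  # puzzle complete: erase and move on
            self.puzzle_index += 1
            return "solved"
        return "ok"

    def jump(self):
        """A jump repeats the previously played note, if there is one."""
        if not self.played:
            return "ignored"
        return self.play(self.played[-1])
```

For example, with a made-up second puzzle of E, E, G, the user could play E, jump to repeat it, and then play G to solve it.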

Sight Filter

The image on the left shows the highest-level view of the sight filter, which switches between 3 filters. The user "enters" each one by lining their eyes up with a circle that floats onscreen. This filter is driven by the Script TOP, which uses MediaPipe for face detection. It tracks each eye’s position, as well as the space in between the eyes, and uses these as the trigger for when the eyes overlap with the circle, which starts an animation. The green nodes are CHOPs (Channel Operators), which track the xy position of each eye and of the center point between the eyes in screen space. As shown in the image on the right, a Switch TOP blends between the animations, and additional code in the script interpolates values as the circle shrinks to blend between filters. Passing the video input through a resolution scale to ½ size preserves 60 fps.
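A minimal sketch of the overlap test and the blend interpolation might look like this. It assumes the trigger is the midpoint between the eyes falling inside the circle, which is one reading of the description above. The function names are hypothetical and coordinates are assumed to be normalized.

```python
import math


def eye_midpoint(left_eye, right_eye):
    """Center point between the two eyes."""
    return ((left_eye[0] + right_eye[0]) / 2, (left_eye[1] + right_eye[1]) / 2)


def eyes_in_circle(left_eye, right_eye, center, radius):
    """True when the point between the eyes lies inside the floating circle."""
    return math.dist(eye_midpoint(left_eye, right_eye), center) <= radius


def blend_factor(radius, start_radius):
    """0.0 while the circle is full size, rising to 1.0 as it shrinks to nothing."""
    if start_radius <= 0:
        return 1.0
    return min(1.0, max(0.0, 1.0 - radius / start_radius))


def lerp(a, b, t):
    """Linear interpolation between two values, e.g. two filter parameters."""
    return a + (b - a) * t
```

The blend factor could then drive a fractional switch index or any other parameter that should fade from one filter to the next.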

Touch Filter

To represent touch overstimulation, the filter clones the user on screen to evoke claustrophobia and crowdedness. There are overlaid colorful effects that get more intense over time, encouraging the user to move, which only makes the scene more crowded and overstimulating. To stop the experience, I implemented a creative way of detecting whether there is significant motion in the image, which I intend to mean “take a step back and slow down” to calm overstimulating thoughts. I took the video stream and composited it, using a subtractive operation, with the video stream from 4 frames ago, which is held in a Cache TOP. This shows which pixels changed value in the last 4 frames, or in other words, where movement occurred.
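Outside of TouchDesigner, the same frame-differencing idea can be sketched in plain Python. Frames here are small 2D lists of grayscale values between 0 and 1. The class name `MotionDetector` and the per-pixel threshold are hypothetical, and the real project does this on the GPU with TOPs.

```python
from collections import deque


class MotionDetector:
    """Compares each frame with the frame from `delay` frames earlier."""

    def __init__(self, delay=4, pixel_threshold=0.1):
        self.history = deque(maxlen=delay)  # plays the role of the cache
        self.pixel_threshold = pixel_threshold

    def update(self, frame):
        """Return the fraction of pixels that changed, or 0.0 while warming up."""
        changed = 0.0
        if len(self.history) == self.history.maxlen:
            old = self.history[0]  # the frame from `delay` frames ago
            total = 0
            moved = 0
            for new_row, old_row in zip(frame, old):
                for a, b in zip(new_row, old_row):
                    total += 1
                    if abs(a - b) > self.pixel_threshold:  # subtract and threshold
                        moved += 1
            changed = moved / total if total else 0.0
        self.history.append(frame)
        return changed
```

A result near zero means the image has barely changed over the last 4 frames.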

Image Below Video

This is a high-level view of the touch filter. This filter uses a feedback loop and a Cache TOP so that every nth frame can be selected and used to loop motion infinitely, resulting in a cloning effect.

Top Left Image

This is the core of the infinite looping. A feedback loop works by inputting a frame into a Feedback TOP, which simply tells the node the correct resolution. Then, the same stream that was fed into the Feedback TOP is passed into a Composite TOP, and finally, the Composite TOP is dragged onto the Feedback TOP, which assigns it as the Feedback TOP’s target TOP. This means that the feedback now accumulates over time: it stores all previous frames and combines them in the composite operation. The addition of the Cache TOP here allows frames to be selected, so instead of combining every frame, it can go n frames into the past and produce delayed and infinitely repeated motion. The composite operation here takes the minimum value, so it works best with back lighting. The operation can be switched to maximum if there is lighting in front.
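To show why the delayed feedback produces repeating clones, here is a small pure-Python sketch of the same idea. Each output frame is the per-pixel minimum of the live frame and the output from `delay` frames earlier, so a dark silhouette keeps reappearing. `CloneFeedback` is a hypothetical name, and frames are 2D lists of brightness values.

```python
from collections import deque


class CloneFeedback:
    """Minimum-composites each live frame with the output from `delay` frames ago."""

    def __init__(self, delay):
        self.cache = deque(maxlen=delay)  # stores past outputs, not raw inputs

    def update(self, frame):
        if len(self.cache) == self.cache.maxlen:
            delayed = self.cache[0]
            # Darker pixel wins, so backlit silhouettes persist as clones.
            out = [[min(a, b) for a, b in zip(new_row, old_row)]
                   for new_row, old_row in zip(frame, delayed)]
        else:
            out = [row[:] for row in frame]  # not enough history yet
        self.cache.append(out)
        return out
```

Replacing `min` with `max` corresponds to the front-lit case mentioned above.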

Top Right Image

To tell whether motion has been happening across many frames, and in a sufficient amount to play the experience, another feedback loop allows more frames of motion to be accumulated and analyzed together. The green topto1 CHOP node analyzes the RGB levels of the TOP of interest.

Middle Images

To preserve the user's image as the experience gets more chaotic, another composite overlays the ghostly white feedback-on-motion effect, so that the user can be seen over all the copies. As the experience goes on, it slowly switches to a remapping of that feedback with highly amplified and grainy noise, meant to mimic the static sensation one feels when a limb falls asleep. To clear the chaos on screen, the user must stop moving and stay still for 2 seconds. Upon doing so twice, the user is returned to the home screen.

Bottom Image

These are CHOP nodes that do a series of calculations to determine if the user has stopped moving.
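The stay-still check can be approximated in Python as a small timer over a motion level between 0 and 1. This is a sketch and not the CHOP network itself: the motion threshold is a guess, `StillnessTracker` is a hypothetical name, and requiring the user to move again between the two holds is my own assumption.

```python
class StillnessTracker:
    """Counts how many times the user has held still for hold_seconds."""

    def __init__(self, motion_threshold=0.02, hold_seconds=2.0, required_holds=2):
        self.motion_threshold = motion_threshold
        self.hold_seconds = hold_seconds
        self.required_holds = required_holds
        self.still_since = None
        self.counted = False  # True once the current still period was counted
        self.holds = 0

    def update(self, motion, now):
        """Return True once the user should be sent back to the home screen."""
        if motion > self.motion_threshold:
            # Movement resets the timer and allows a new hold to be counted.
            self.still_since = None
            self.counted = False
        else:
            if self.still_since is None:
                self.still_since = now
            if not self.counted and now - self.still_since >= self.hold_seconds:
                self.holds += 1
                self.counted = True
        return self.holds >= self.required_holds
```

The `motion` value could come from any per-frame measure of how much of the image changed.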

Smell Filter

The smell filter represents the overstimulation that can occur from scents like cologne. The filter takes the illustrations shown in the image on the far right and uses displace and noise to make them warp and move, reminiscent of how scent lines are drawn in cartoons. The warped shapes are then overlaid on top of the user, alongside an image of a cologne bottle in the center of the screen.

Epilogue

Once the user has fully experienced all five filters, a 40-second scripted epilogue begins playing, in which the user is thanked for participating in the Overstim Sim experience and asked to reflect on it, and the overall message and purpose of the Overstim Sim is reaffirmed.

Professional Outreach

Bradon Webb

Creative Director, Experience Designer

On Zoom, Bradon spent an hour looking over our current TouchDesigner files so that he could recommend potential methods for lowering memory and GPU usage in order to deal with lag and frame rate issues. Bradon also answered some questions we had about entering the interactive technology field.

Stefan Kwint

CEO of Kwintessens Media

Through a series of messages on LinkedIn, Stefan recommended that we use MediaPipe to integrate interactivity into the project via its capabilities in hand tracking, pose detection, and facial recognition.

Anthony Melo

Senior Software Engineer at Universal

Anthony was part of the team that made the Bowser Jr’s Shadow Showdown experience at Super Nintendo World, which served as inspiration for the interactivity of The Overstim Sim. He also provided advice on ways to detect when the user was jumping.

Related Works

Museum of Science and Industry

Numbers in Nature

Everything Is Hacked

I made a face-controlled keyboard

Super Nintendo World Japan

Bowser Jr's Shadow Showdown

I made a FULL-BODY keyboard!
